Introduction

Neutron, introduced in the Folsom release (under its original name, Quantum), is a cloud networking controller and a networking-as-a-service project within the OpenStack cloud computing initiative. Neutron includes a set of application programming interfaces (APIs), plug-ins and authentication/authorization control software that enable interoperability and orchestration of network devices and technologies within infrastructure-as-a-service (IaaS) environments[1].

What is OpenStack? OpenStack is a free and open-source software platform for cloud computing, mostly deployed as infrastructure-as-a-service (IaaS). The software platform consists of interrelated components that control hardware pools of processing, storage, and networking resources throughout a data center[2].

Neutron Components

Before we dive into Neutron, it is worth doing a quick overview of Neutron's main hardware components and traffic types.

Hardware Components

- Controller Node – Controller nodes host the main API and management services required for OpenStack to function.

- Compute Node – Compute nodes simply run the virtual (Nova) instances.

- Network Node – The network node's role is to run the network services and to provide the ingress and egress points for routed traffic to/from the instances. In other words, the network node hosts any legacy (standalone) routers that are created.

NOTE By separating the network and controller nodes, you can ensure that saturation from routed traffic does not impact your API services.

Network Traffic Types

There are 4 main types of network traffic within Neutron. They are Management, API, External and Guest.

- Management – The management network is used for node (compute/controller) communication for internal services.

- API – The API network exposes the various API services, such as Glance, Nova, etc.

- External – The external network provides ‘external’ connectivity to the Neutron router, which in turn allows remote access into the instances via floating IPs.

- Guest – The guest network is used for traffic between instances.

Network Categories

Networks that allow for connectivity to/from instances fall into 2 categories – Provider and Tenant networks.

- Provider Networks are created by the OpenStack administrator in order to provide a mapping onto a physical network. This provides the ability to use a physical gateway for the instances.

- Tenant Networks allow connectivity within a given project. These networks are isolated and not shared by other projects.

There are 4 main types of tenant/provider networks:

- Flat – Instances are on the same network, no VLAN tagging or segregation is performed. The network can span multiple hosts.

- Local – Instances are contained upon a single compute host and do not have connectivity to external networks.

- VLAN – Multiple networks can be created that relate to the VLANs configured within your physical network.

- VXLAN and GRE – These networks use either the VXLAN or GRE protocols to encapsulate traffic between nodes by forming logical overlay tunnels between all nodes (compute/controller).
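To make the overlay idea concrete, here is a minimal sketch of the 8-byte VXLAN header defined in RFC 7348: a flags byte with the "I" bit (0x08) set, 24 reserved bits, a 24-bit VXLAN Network Identifier (VNI) and a final reserved byte. The helper names are hypothetical; in a real deployment Open vSwitch or the Linux kernel builds this header, and the outer Ethernet/IP/UDP headers (UDP port 4789) are omitted here.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field is valid (RFC 7348)


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VNI (hypothetical helper)."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + 24 reserved bits.
    # Word 2: VNI (24 bits) + 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from an 8-byte VXLAN header."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("I flag not set")
    return vni_word >> 8


# The encapsulated payload is the VXLAN header followed by the original frame.
inner_frame = b"\x00" * 14  # placeholder Ethernet header of the instance's frame
packet = build_vxlan_header(5001) + inner_frame
print(packet[:8].hex())  # 0800000000138900
```

The 24-bit VNI is what lets overlay networks scale well beyond the 4094 usable 12-bit VLAN IDs.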

Network Elements

The key elements of these networks are:

- Network – A network is a single layer 2 broadcast domain. Multiple subnets and ports are then assigned to the network.

- Subnet – A subnet is an address block consisting of IPv4/IPv6 addresses which are allocated to the instances via DHCP.

- Port – A port is a virtual switch port on the virtual switch. Each port holds the MAC address and IP address used by the interface (for example, an instance's virtual NIC) that connects to it.
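The relationship between networks, subnets and ports can be sketched as plain Python dataclasses. This is an illustrative model only: the class and field names are hypothetical rather than Neutron's actual database models, only IPv4 is considered, and the allocator simply hands out the next free host address after reserving the first one for the gateway (an assumption made for the example).

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class Subnet:
    """An IPv4 address block whose addresses are handed out to ports."""
    cidr: str
    allocated: set = field(default_factory=set)

    def __post_init__(self):
        self.network = ipaddress.ip_network(self.cidr)
        # Assumption: the first host address is reserved for the gateway.
        self.gateway = next(self.network.hosts())
        self.allocated.add(self.gateway)

    def allocate(self):
        """Return the next free host address in the block."""
        for addr in self.network.hosts():
            if addr not in self.allocated:
                self.allocated.add(addr)
                return addr
        raise RuntimeError(f"subnet {self.cidr} is exhausted")


@dataclass
class Port:
    """A virtual switch port holding the MAC/IP used by the attached interface."""
    mac: str
    ip: ipaddress.IPv4Address


@dataclass
class Network:
    """A single layer 2 broadcast domain that owns subnets and ports."""
    name: str
    subnets: list = field(default_factory=list)
    ports: list = field(default_factory=list)

    def create_port(self):
        ip = self.subnets[0].allocate()  # simplification: always use the first subnet
        mac = f"fa:16:3e:00:00:{len(self.ports) + 1:02x}"  # hypothetical MAC scheme
        port = Port(mac=mac, ip=ip)
        self.ports.append(port)
        return port


net = Network("private", subnets=[Subnet("10.0.0.0/24")])
port = net.create_port()
print(port.mac, port.ip)  # fa:16:3e:00:00:01 10.0.0.2
```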

Plugins / Agents

Let's look next at plugins and agents.

The role of an agent is to implement requested changes on the compute and/or network nodes, for example by configuring the local virtual switch. These changes can come either via a plugin or directly.

Plugins are pluggable Python classes that are invoked while responding to API requests, in turn providing additional features and functionality. There are 2 main types of plugins:

- Core Plugin – The core plugin mainly deals with L2 connectivity and IP address management. Example: the ML2 plugin.

- Service Plugin – Service plugins add additional networking features such as routing, firewalling and VPN.
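As an illustration of the core/service split, the sketch below dispatches API resource types to whichever loaded plugin owns them. The class names, the single `create` method and the resource mapping are assumptions made for this example; real Neutron plugins implement a much larger interface (for example `create_network`, `create_port` on the core plugin and `create_router` on the L3 service plugin) and are selected through the Neutron configuration.

```python
class CorePlugin:
    """Hypothetical core plugin: L2 resources and IP address management."""
    resources = {"network", "subnet", "port"}

    def create(self, resource, body):
        return {"handled_by": "core", "resource": resource, **body}


class RouterServicePlugin:
    """Hypothetical service plugin adding an extra feature: L3 routing."""
    resources = {"router", "floatingip"}

    def create(self, resource, body):
        return {"handled_by": "l3", "resource": resource, **body}


class Dispatcher:
    """Maps each API resource type to the plugin that owns it."""

    def __init__(self, core, service_plugins):
        self.owner = {}
        for plugin in [core, *service_plugins]:
            for resource in plugin.resources:
                self.owner[resource] = plugin

    def handle_post(self, resource, body):
        plugin = self.owner.get(resource)
        if plugin is None:
            raise LookupError(f"no plugin loaded for '{resource}'")
        return plugin.create(resource, body)


api = Dispatcher(CorePlugin(), [RouterServicePlugin()])
print(api.handle_post("router", {"name": "r1"})["handled_by"])  # l3
```

Without a service plugin loaded, requests for its resources simply have nowhere to go, which mirrors how features such as routing or firewalling only appear once the corresponding plugin is enabled.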

ML2

The Modular Layer 2 (ML2) plugin was first introduced in the Havana release. ML2 superseded the monolithic LinuxBridge and Open vSwitch plugins; however, the LinuxBridge and Open vSwitch agents can still be used with ML2 via their corresponding mechanism drivers. ML2 uses 2 kinds of driver: the TypeDriver and the MechanismDriver.

- TypeDriver – Allows for the implementation of a range of network types, such as VXLAN, GRE and VLAN.

- MechanismDriver – Provides the mechanism that actually realizes the networks defined via the TypeDriver. This is achieved by performing CRUD actions on vendor platforms such as Cisco Nexus, Arista, LinuxBridge and Open vSwitch.
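The division of labour inside ML2 can be sketched as follows: a type driver decides *what* segment a network gets (network type plus segmentation ID), and every configured mechanism driver is then told about it so it can program *how* that segment is realized on its backend. The class names, method names and VNI range are hypothetical simplifications, not the real ML2 driver API (which, for example, splits each operation into precommit and postcommit hooks).

```python
class VxlanTypeDriver:
    """Hypothetical type driver allocating VXLAN segments from a VNI range."""
    network_type = "vxlan"

    def __init__(self, vni_min, vni_max):
        self.free = list(range(vni_min, vni_max + 1))

    def allocate_segment(self):
        if not self.free:
            raise RuntimeError("VNI range exhausted")
        return {"network_type": self.network_type,
                "segmentation_id": self.free.pop(0)}


class LoggingMechanismDriver:
    """Stands in for a backend such as OVS, LinuxBridge or a vendor switch."""

    def __init__(self, name):
        self.name = name
        self.log = []

    def create_network(self, segment):
        # A real driver would configure its switch or fabric here.
        self.log.append((segment["network_type"], segment["segmentation_id"]))


class Ml2:
    def __init__(self, type_driver, mech_drivers):
        self.type_driver = type_driver
        self.mech_drivers = mech_drivers

    def create_network(self):
        segment = self.type_driver.allocate_segment()  # the "what"
        for driver in self.mech_drivers:               # the "how", per backend
            driver.create_network(segment)
        return segment


ovs = LoggingMechanismDriver("openvswitch")
ml2 = Ml2(VxlanTypeDriver(1001, 2000), [ovs, LoggingMechanismDriver("l2population")])
print(ml2.create_network())  # {'network_type': 'vxlan', 'segmentation_id': 1001}
```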

L2 Population Driver

The main role of the L2 population mechanism driver is to reduce L2 broadcasts when using overlay networks. This is achieved by pre-populating the forwarding tables of the virtual switches (LinuxBridge or OVS). The L2 population driver also uses proxy ARP to ensure ARP requests are answered locally on the host, in turn preventing ARP broadcasts from having to traverse the overlay tunnels.
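A minimal sketch of the idea, assuming a hypothetical per-host table that is filled in advance with (IP, MAC, tunnel endpoint) entries for every port on the overlay. ARP requests for known addresses are answered locally, and unicast frames go down the one tunnel towards the hosting node; only unknown destinations fall back to flooding.

```python
class L2PopTable:
    """Hypothetical per-host ARP/forwarding table pre-populated by the controller."""

    def __init__(self):
        self.arp = {}  # IP -> MAC, used by the local ARP responder
        self.fdb = {}  # MAC -> tunnel endpoint (VTEP) IP of the hosting node

    def add_port(self, ip, mac, vtep):
        self.arp[ip] = mac
        self.fdb[mac] = vtep

    def handle_arp_request(self, target_ip):
        """Answer locally if the address is known, otherwise request a flood."""
        mac = self.arp.get(target_ip)
        if mac is not None:
            return ("reply", mac)
        return ("flood", None)

    def tunnel_for(self, mac):
        """Send unicast to the single node hosting the MAC, not to every node."""
        return self.fdb.get(mac)


table = L2PopTable()
table.add_port("10.0.0.5", "fa:16:3e:aa:bb:cc", vtep="192.168.50.12")
print(table.handle_arp_request("10.0.0.5"))  # ('reply', 'fa:16:3e:aa:bb:cc')
```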

Virtual Switches

Out of the box, Neutron supports both Open vSwitch and Linux bridges. Below is a further explanation of the 2:

- Open vSwitch – OVS (Open vSwitch) is a software-based switch. There are 2 deployment modes:

  - Normal – In normal mode, each OVS acts as a regular layer 2 learning switch.

  - Flow – Forwarding decisions are made via the use of flows, which are pushed to the switch by a controller. This is the default deployment mode.

- Linux Bridges – Analogous to a physical switch, a Linux bridge provides layer 2 connectivity between multiple interfaces by switching packets based on a MAC forwarding table.
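The MAC learning behaviour shared by a Linux bridge and OVS in normal mode can be sketched in a few lines: learn the source MAC on the ingress port, forward to the learned port when the destination is known, and otherwise flood to every other port. The port names and frame representation are hypothetical, and VLAN handling and the ageing-out of entries are omitted.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"


class LearningSwitch:
    """Minimal layer 2 learning switch (no VLANs, no entry ageing)."""

    def __init__(self, ports):
        self.ports = set(ports)
        self.mac_table = {}  # MAC address -> port it was last seen on

    def receive(self, in_port, src_mac, dst_mac):
        """Return the set of ports the frame is forwarded out of."""
        self.mac_table[src_mac] = in_port  # learn where the sender lives
        out_port = self.mac_table.get(dst_mac)
        if dst_mac == BROADCAST or out_port is None:
            return self.ports - {in_port}  # flood broadcast/unknown unicast
        if out_port == in_port:
            return set()  # destination is on the ingress segment: filter
        return {out_port}  # known unicast


br = LearningSwitch(["tap1", "tap2", "eth2"])
print(sorted(br.receive("tap1", "fa:16:3e:00:00:01", "fa:16:3e:00:00:02")))  # ['eth2', 'tap2']
print(br.receive("tap2", "fa:16:3e:00:00:02", "fa:16:3e:00:00:01"))  # {'tap1'}
```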

Topology Example

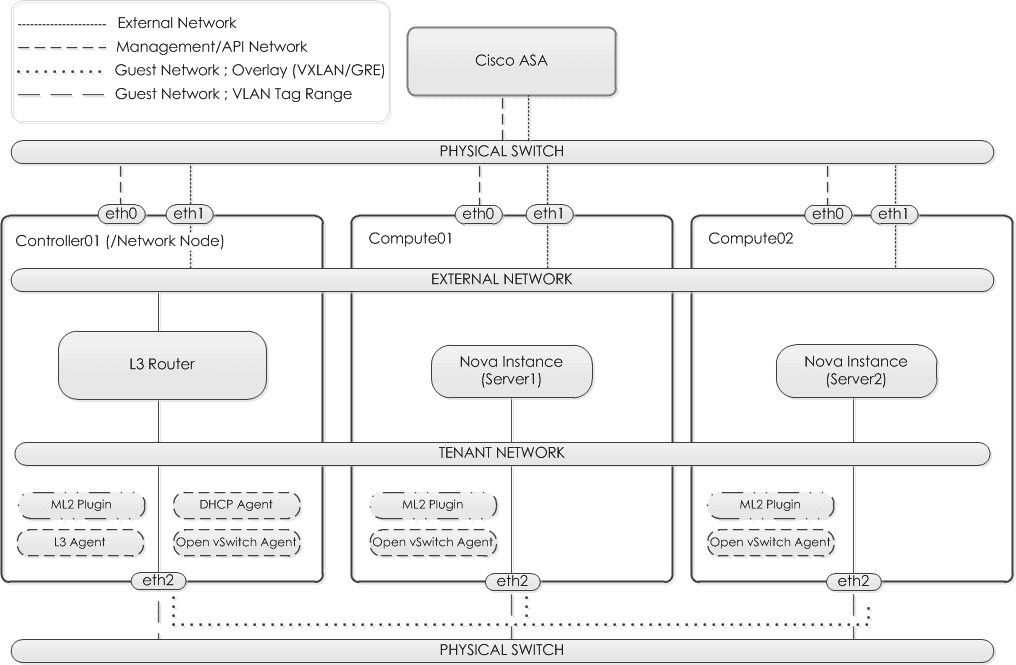

One of the hurdles you will face when learning OpenStack Neutron is the vast amount of terminology involved. Let us take a moment to reflect on what we have covered so far. Based on the diagram above, here are the key points to note:

- A 3-node setup is configured: 2 compute nodes and a combined controller/network node. Take notice of the plugins and agents shown for each node.

- The interfaces are configured as follows:

  - Management/API (eth0) – Provides connectivity to the API services and also allows for internal connectivity between services.

  - External Network (eth1) – Provides connectivity into the external network via the physical switch. In this example a trunk is configured, and VLAN100 is trunked from the External Network virtual switch up to the physical Cisco ASA.

  - Guest Network, VLAN-tagged/overlay (eth2) – Carries tenant traffic between nodes. Traffic is sent between nodes either with a VLAN tag or encapsulated using VXLAN or GRE.

In terms of traffic flow, each instance has its default gateway configured with the IP of the L3 router. Traffic between Server1 and Server2 is sent via the tenant network. Traffic from Server1 to, let's say, Google would be routed northbound via the L3 router, through the external network and up to the Cisco ASA, where it would be routed accordingly.
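The forwarding decision each instance makes can be sketched with Python's standard `ipaddress` module: destinations inside the instance's own subnet are reached directly over the tenant network, and everything else is handed to the default gateway (the L3 router). The function name and addresses are hypothetical and not taken from the diagram.

```python
import ipaddress


def next_hop(src_if: str, gateway: str, dst: str) -> str:
    """Return where an instance sends a packet destined for dst.

    src_if is the instance's interface in CIDR form, e.g. "10.0.0.5/24".
    """
    iface = ipaddress.ip_interface(src_if)
    dst_ip = ipaddress.ip_address(dst)
    if dst_ip in iface.network:
        # Same subnet: deliver directly across the tenant network.
        return f"direct to {dst_ip} via tenant network"
    # Anything else goes to the L3 router acting as default gateway.
    return f"via default gateway {gateway}"


server1 = "10.0.0.5/24"
print(next_hop(server1, "10.0.0.1", "10.0.0.6"))  # direct to 10.0.0.6 via tenant network
print(next_hop(server1, "10.0.0.1", "8.8.8.8"))   # via default gateway 10.0.0.1
```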

Routers

A Neutron router, much like a physical router, provides connectivity between networks and is also able to perform network address translation (NAT).

Prior to the Juno release, only standalone routers were supported; these are also known as legacy routers. To provide highly available and distributed routing, 2 new features were introduced: VRRP and DVR.

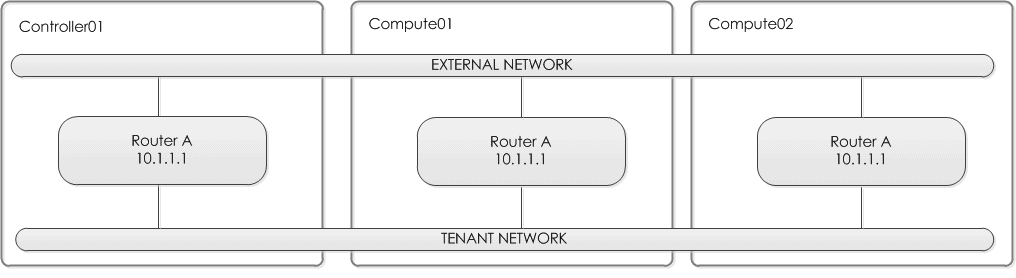

VRRP

The Virtual Router Redundancy Protocol (VRRP) allows you to increase the availability of a network's default gateway. This is achieved by grouping a set of routers as one virtual router. A single router assumes the role of master and processes the traffic. On failover, a backup router is promoted to master.
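The master election can be sketched as below, following the VRRP rule (RFC 5798) that the live router with the highest priority becomes master, with ties broken by the higher IP address. Router names, priorities and addresses are hypothetical, and real VRRP also involves advertisement timers and preemption settings; in Neutron the protocol itself is run by keepalived inside each HA router namespace.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class VrrpRouter:
    name: str
    priority: int  # 1-254 for backup routers; 255 is reserved for the address owner
    address: str
    alive: bool = True


def elect_master(routers):
    """Pick the master among live routers: highest priority, then highest IP."""
    candidates = [r for r in routers if r.alive]
    if not candidates:
        return None
    return max(candidates,
               key=lambda r: (r.priority, ipaddress.ip_address(r.address)))


group = [VrrpRouter("r1", 100, "169.254.192.1"),
         VrrpRouter("r2", 100, "169.254.192.2"),
         VrrpRouter("r3", 50, "169.254.192.3")]
print(elect_master(group).name)  # r2 (tie on priority, higher IP wins)
group[1].alive = False           # simulate failure of the master
print(elect_master(group).name)  # r1 (backup promoted to master)
```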

Below is a simplified overview of the topology:

Although configuring VRRP within OpenStack gives you greater redundancy, traffic is still routed via a single node/L3 router (the current master). This creates a potential bottleneck within the network. In addition, unnecessary failovers and split-brain can occur due to connectivity issues on the internally created VRRP network.

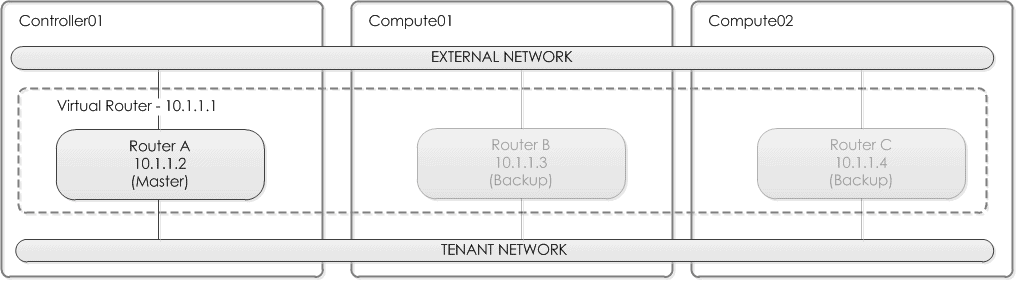

DVR

Prior to the Juno release of OpenStack, the implementation of L3 routers meant that all routed traffic between instances (east-west) and all traffic via the floating IPs (north-south) was sent via the network node. This in turn created bottlenecks and performance issues.

DVR (Distributed Virtual Router) implements the L3 router across each of the compute nodes, allowing for:

- forwarding of east-west traffic locally on the compute node.

- presentation of the floating IP namespaces on each of the compute nodes. This allows external network traffic to be routed via the compute node hosting the given instance.

Below is a simplified overview of the topology:

NOTE One key caveat with DVR is SNAT. Because the SNAT service is not distributed (i.e. it is centralized), traffic that requires source address translation, such as outbound traffic from instances without a floating IP, must still be routed via a central SNAT namespace, i.e. via the network node.
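The resulting placement of routing under DVR can be summarised as a small decision function. This is a simplified model under stated assumptions (hypothetical function and field names, subnets compared as plain strings, HA of the SNAT namespace ignored), intended only to show which node handles which kind of traffic; the namespace labels follow Neutron's qrouter/fip/snat naming.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Instance:
    compute_node: str
    subnet: str
    floating_ip: Optional[str] = None


def routing_node(src: Instance, dst: Optional[Instance] = None) -> str:
    """Return the node (and namespace) that routes traffic from src.

    dst is another instance for east-west traffic, or None for traffic
    heading to the external network (north-south).
    """
    if dst is not None:
        if dst.subnet == src.subnet:
            return "no routing needed (same L2 network)"
        # East-west: the distributed router on the source compute node routes it.
        return f"{src.compute_node} (local qrouter namespace)"
    if src.floating_ip:
        # North-south with a floating IP: the FIP namespace on the same compute node.
        return f"{src.compute_node} (local fip namespace)"
    # North-south without a floating IP needs SNAT, which is centralized.
    return "network node (snat namespace)"


web = Instance("compute1", "10.0.0.0/24", floating_ip="203.0.113.10")
db = Instance("compute2", "10.0.1.0/24")
print(routing_node(web, db))  # compute1 (local qrouter namespace)
print(routing_node(db))       # network node (snat namespace)
```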

Metering

Statistics can be collected for traffic that passes through an L3 router. Labels are assigned to IP ranges, which are then subject to bandwidth metering. Metering works by creating additional chains within iptables inside the L3 router namespace. Metering is not configured by default; to enable the metering extension, the metering service plugin must be installed.
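Conceptually, each metering label is a set of IP ranges with byte and packet counters attached, much like the per-rule counters of an iptables chain. The sketch below models that with hypothetical label names and CIDRs, counts only traffic leaving the router by destination address, and does not generate real iptables rules.

```python
import ipaddress
from collections import defaultdict


class Meter:
    """Hypothetical per-router meter: label -> IP ranges -> counters."""

    def __init__(self):
        self.labels = {}  # label name -> list of ip_network objects
        self.bytes = defaultdict(int)
        self.packets = defaultdict(int)

    def add_label(self, name, cidrs):
        self.labels[name] = [ipaddress.ip_network(c) for c in cidrs]

    def account(self, dst_ip, size):
        """Count one packet to dst_ip against every label whose ranges match."""
        addr = ipaddress.ip_address(dst_ip)
        for name, nets in self.labels.items():
            if any(addr in n for n in nets):
                self.bytes[name] += size
                self.packets[name] += 1


m = Meter()
m.add_label("internet", ["0.0.0.0/0"])
m.add_label("partner", ["198.51.100.0/24"])
m.account("198.51.100.7", 1500)
m.account("8.8.8.8", 500)
print(m.bytes["internet"], m.bytes["partner"])  # 2000 1500
```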

LBaaS

Another Neutron feature is Load Balancing as a Service (LBaaS). By default, Neutron uses the software-based load balancer HAProxy. LBaaS can control either hardware- or software-based load balancers via the use of Neutron plugins. This in turn allows you to configure your load balancer using either the REST API or the neutron command-line interface.
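The core job of the load balancer behind LBaaS is choosing a pool member for each new connection. The sketch below shows round robin, one of the balancing algorithms HAProxy supports, over a pool of hypothetical member addresses; health checks, session persistence and other algorithms such as least connections are left out.

```python
import itertools


class RoundRobinPool:
    """Hands out healthy members in turn, skipping ones marked down."""

    def __init__(self, members):
        self.members = list(members)
        self.down = set()
        self._cycle = itertools.cycle(self.members)

    def mark_down(self, member):
        self.down.add(member)

    def pick(self):
        # Make at most one full pass over the pool before giving up.
        for _ in range(len(self.members)):
            member = next(self._cycle)
            if member not in self.down:
                return member
        raise RuntimeError("no healthy members in pool")


pool = RoundRobinPool(["10.0.0.11:80", "10.0.0.12:80", "10.0.0.13:80"])
print([pool.pick() for _ in range(4)])
# ['10.0.0.11:80', '10.0.0.12:80', '10.0.0.13:80', '10.0.0.11:80']
```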

Firewalling

Security groups

A security group is a set of rules used to restrict access to and from an instance. Security groups are applied on a per-port basis on each compute node. They are implemented using iptables, where each security group is added to the relevant iptables chain.

All projects have a “default” security group, which is applied to instances that have no other security group defined. Unless changed, this security group denies all incoming traffic except traffic from other instances in the same security group, and allows all outgoing traffic.
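A simplified model of ingress evaluation: traffic is dropped unless some rule allows it, and a rule may match on protocol, port, a remote IP prefix or membership of a remote security group. The data structures are hypothetical, and the model ignores connection tracking, which in reality lets reply traffic for allowed outbound connections back in regardless of the ingress rules.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rule:
    protocol: Optional[str] = None       # None matches any protocol
    port: Optional[int] = None           # None matches any port
    remote_prefix: Optional[str] = None  # e.g. "0.0.0.0/0"
    remote_group: Optional[str] = None   # e.g. "default"


def ingress_allowed(rules, src_ip, src_groups, protocol, port):
    """Default deny: return True only if some ingress rule matches."""
    for r in rules:
        if r.protocol not in (None, protocol):
            continue
        if r.port not in (None, port):
            continue
        if r.remote_group is not None and r.remote_group not in src_groups:
            continue
        if r.remote_prefix is not None and (
                ipaddress.ip_address(src_ip) not in ipaddress.ip_network(r.remote_prefix)):
            continue
        return True
    return False


# Ingress side of the "default" group: only members of the same group may connect.
default_ingress = [Rule(remote_group="default")]
print(ingress_allowed(default_ingress, "10.0.0.7", {"default"}, "tcp", 22))  # True
print(ingress_allowed(default_ingress, "203.0.113.9", set(), "tcp", 22))     # False
```

Opening SSH to the world would then amount to appending `Rule(protocol="tcp", port=22, remote_prefix="0.0.0.0/0")` to the list.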

FWaaS

Firewall as a Service (FWaaS) provides the ability to perform packet filtering (Layer 3/4) within the L3 router. This is slightly different from security groups, where filtering is performed per port on each compute node.

FWaaS uses the following components:

- Firewall – An instance of the firewall that is associated with a single firewall policy. A firewall can then be assigned to one or more L3 routers.

- Firewall Policy – A grouping of firewall rules.

- Firewall Rule – A set of conditions used to define how traffic will be handled, i.e. whether it should be dropped, allowed, etc. This is equivalent to an ACE (Access Control Entry) in the world of Cisco.

At the time of writing, the key caveat compared with security groups is that the direction of traffic cannot be specified within a firewall rule.
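The firewall, policy and rule hierarchy can be sketched as below. The sketch assumes that rules in a policy are evaluated in order, that the first matching rule decides the action, and that unmatched traffic is denied; the field names are hypothetical, and the rules deliberately carry no direction field, matching the caveat described in the text.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class FirewallRule:
    action: str                     # "allow" or "deny"
    protocol: Optional[str] = None  # None matches any protocol
    dst_port: Optional[int] = None  # None matches any port
    src_cidr: str = "0.0.0.0/0"

    def matches(self, src_ip, protocol, dst_port):
        return (self.protocol in (None, protocol)
                and self.dst_port in (None, dst_port)
                and ipaddress.ip_address(src_ip) in ipaddress.ip_network(self.src_cidr))


@dataclass
class FirewallPolicy:
    rules: List[FirewallRule] = field(default_factory=list)  # ordered


@dataclass
class Firewall:
    policy: FirewallPolicy                            # exactly one policy
    routers: List[str] = field(default_factory=list)  # one or more L3 routers

    def decide(self, src_ip, protocol, dst_port):
        for rule in self.policy.rules:  # assumed: first match wins
            if rule.matches(src_ip, protocol, dst_port):
                return rule.action
        return "deny"                   # assumed: implicit deny


policy = FirewallPolicy([FirewallRule("deny", "tcp", 23),
                         FirewallRule("allow", "tcp", None, "10.0.0.0/8")])
fw = Firewall(policy, routers=["router1"])
print(fw.decide("10.1.2.3", "tcp", 23))   # deny
print(fw.decide("10.1.2.3", "tcp", 443))  # allow
print(fw.decide("192.0.2.1", "udp", 53))  # deny
```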

VPNaaS

The creation and management of IPsec VPN tunnels is available within Neutron via VPN as a Service (VPNaaS). This allows you to peer your L3 router with another VPN gateway and encrypt traffic between the 2 points.

VPNaaS consists of the following components:

- IKE policies – Defines the phase 1 attributes.

- IPsec policies – Defines the phase 2 attributes.

- VPN services – Defines the local encryption domain and L3 router.

- IPsec site connections – Contains associations to the IKE and IPsec policies, as well as the VPN service. Additionally, the peer address and remote encryption domain are defined here.
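How the four VPNaaS resources reference one another can be sketched with dataclasses. The attribute values (aes-256, sha256, group14 and so on) are hypothetical examples rather than Neutron defaults, the pre-shared key is a placeholder, and the sketch only models the associations, not tunnel negotiation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class IkePolicy:    # phase 1 attributes
    encryption: str
    auth: str
    dh_group: str
    lifetime_s: int


@dataclass
class IpsecPolicy:  # phase 2 attributes
    encryption: str
    auth: str
    pfs: str
    lifetime_s: int


@dataclass
class VpnService:   # local side: L3 router plus local encryption domain
    router: str
    local_cidrs: List[str]


@dataclass
class SiteConnection:  # ties the policies and service to the remote peer
    ike: IkePolicy
    ipsec: IpsecPolicy
    service: VpnService
    peer_address: str
    peer_cidrs: List[str]  # remote encryption domain
    psk: str

    def summary(self):
        return (f"{self.service.router}: {self.service.local_cidrs} <-> "
                f"{self.peer_address} {self.peer_cidrs}")


conn = SiteConnection(
    ike=IkePolicy("aes-256", "sha256", "group14", 3600),
    ipsec=IpsecPolicy("aes-256", "sha256", "group14", 3600),
    service=VpnService("router1", ["10.0.0.0/24"]),
    peer_address="198.51.100.1",
    peer_cidrs=["192.168.10.0/24"],
    psk="example-secret",  # placeholder pre-shared key
)
print(conn.summary())
# router1: ['10.0.0.0/24'] <-> 198.51.100.1 ['192.168.10.0/24']
```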

NOTE As of the Kilo release, VPNaaS is no longer deemed experimental.

IP Types

Within Neutron there are 2 types of IP Addresses, Fixed and Floating.

- Fixed IPs are used for communication between instances and, via SNAT on the L3 router, for outbound access to external networks. They typically use RFC1918 addressing and are not directly reachable from external networks. Fixed IPs are provided to the instance at boot time.

- Floating IPs are analogous to static NAT, i.e. they provide a 1-to-1 mapping between an external address and an instance's fixed IP. This provides the ability for inbound connectivity from the external network into the instance.
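Because a floating IP is a 1-to-1 static NAT, the translation can be modelled as a pair of dictionaries: destination NAT on the way in and source NAT on the way out. The class name and addresses are hypothetical; in the reference L3 agent implementation the actual rewriting is done by iptables rules inside the router (or, with DVR, FIP) namespace.

```python
class FloatingIpNat:
    """Hypothetical 1-to-1 NAT table for floating IPs."""

    def __init__(self):
        self.dnat = {}  # floating IP -> fixed IP (inbound)
        self.snat = {}  # fixed IP -> floating IP (outbound)

    def associate(self, floating_ip, fixed_ip):
        if floating_ip in self.dnat or fixed_ip in self.snat:
            raise ValueError("a static NAT mapping must stay 1-to-1")
        self.dnat[floating_ip] = fixed_ip
        self.snat[fixed_ip] = floating_ip

    def inbound(self, dst_ip):
        """Rewrite the destination of a packet arriving from the external network."""
        return self.dnat.get(dst_ip, dst_ip)

    def outbound(self, src_ip):
        """Rewrite the source of a packet leaving towards the external network.

        Unmapped addresses are returned unchanged here; in practice they would
        be SNATed to the router's external gateway IP instead.
        """
        return self.snat.get(src_ip, src_ip)


nat = FloatingIpNat()
nat.associate("203.0.113.10", "10.0.0.5")
print(nat.inbound("203.0.113.10"))  # 10.0.0.5
print(nat.outbound("10.0.0.5"))     # 203.0.113.10
```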

Further Reading

I hope you have enjoyed this introduction to Neutron. If you want to learn more about Neutron, these may be of interest:

- Learning OpenStack Networking (Neutron) by James Denton – Dives deeper into Neutron. Highly, highly recommended.

- How to Build an OpenStack Network using the Neutron CLI – A great introduction to building a simple network along with an L3 router using the CLI.

References

[1] http://searchsdn.techtarget.com/definition/OpenStack-Neutron

[2] https://en.wikipedia.org/wiki/OpenStack

Additional Links

- http://docs.openstack.org/icehouse/training-guides/content/operator-network-node.html

- http://assafmuller.com/2013/10/13/open-vswitch-basics/

- http://docs.ocselected.org/openstack-manuals/kilo/networking-guide/content/ha-dvr.html

- http://docs.openstack.org/networking-guide

- http://www.unixarena.com/2015/10/openstack-configure-neutron-on-network-node-part-7.html

- https://wiki.openstack.org/wiki/L2population
